Audio, Recording And Playback

A number of factors can affect the sound quality of a recording or reproduction, including the equipment used to make the recording, the processing applied to it, the equipment used to play it back, and the listening environment. In many cases, processing such as equalization (adjusting bass and treble, which changes the timbre of the sound), dynamic range compression and stereo processing is applied to create audio that sounds quite different from the master recording but that listeners may find more agreeable. In other situations the goal is to reproduce audio as closely as possible to the original sound, for example gunfire, explosions or wildlife.

When applied to specific electronic devices, such as loudspeakers, microphones, amplifiers or headphones, sound quality usually refers to accuracy: higher quality devices reproduce sound more accurately.

Between the invention of the phonograph and the introduction of digital media, probably the most important event in the history of sound recording was the introduction of "electrical recording", in which a microphone converted the sound into an electrical signal that was amplified and used to actuate the recording stylus. Since then, recorders and players have moved from large two- and four-track tape machines to digital recorders. Digital recordings can be downloaded onto computers and portable players as audio files and played back on equipment such as televisions, MP3 players, radios, computers and gaming consoles.

FILE FORMATS

Most people are familiar with the commonly used file formats: for music, wma and mp3; for video, avi and mpeg; and for images, gif and jpeg. However, these common formats are not the only ones we use regularly, and there are many file formats that accomplish the same basic task of storing data.
A file format is a specific way of encoding data so that it can be saved as a file. It's important to know that the file format does no encoding on its own; encoding the audio itself is left to the codecs. Some common audio file formats are described below.

.aiff / .wav: Both are normally used to store uncompressed audio, which is lossless but takes up about 10 MB per minute of CD-quality stereo music. AIFF was developed by Apple for the Macintosh, while WAV was developed by Microsoft and IBM.

.aac: Some argue that AAC produces the same quality audio at 96 kbit/s as an mp3 does at 128 kbit/s. However, with recent improvements in mp3 encoders (particularly LAME), mp3s have performed far better in listening tests against AAC than in previous years. That aside, AAC generally offers better sound quality for a given file size than mp3.

.ogg: Ogg is a container format, and Vorbis is the lossy audio compression format most commonly stored in it. Vorbis is open and patent-free, which makes it a favorite of free software developers. Despite claims that it produces better-sounding music at smaller file sizes, Vorbis is not widely used because of its slow encoding and the lack of native support in popular music players such as iTunes. However, many video game makers and programmers have begun using Vorbis because it is open source and does not demand the licensing fees that mp3 and aac have historically required.

SAMPLE RATES, WAVEFORM, PITCH AND HERTZ

Waveform, pitch and hertz are covered in detail on the theory of sound page; however, as a recap, I have also included information on them below.

Sample rate is the number of samples of audio carried per second, measured in Hz or kHz. For example, 36 300 samples per second can be expressed as either 36 300 Hz, or 36.3 kHz.
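Sample rate, together with bit depth and the number of channels, determines how much space uncompressed audio takes up, which is where the figure of roughly 10 MB per minute for .aiff and .wav files comes from. The short Python sketch below does that arithmetic, assuming CD-quality audio (44 100 Hz, 16-bit, stereo) and ignoring file header overhead; bit depth is not discussed in this page and the function names are illustrative only. It also estimates the size of constant-bitrate compressed files such as mp3 or AAC at the 128 kbit/s and 96 kbit/s rates mentioned in the file format section.

```python
# Sketch: storage needed for audio, assuming CD-quality PCM and ignoring file headers.

def pcm_bytes(sample_rate_hz, bit_depth, channels, seconds):
    """Bytes of uncompressed PCM audio (the audio data in a typical .wav or .aiff file)."""
    bytes_per_sample = bit_depth // 8      # 16-bit -> 2 bytes, 24-bit -> 3 bytes
    return sample_rate_hz * bytes_per_sample * channels * seconds

def compressed_bytes(bitrate_kbit_s, seconds):
    """Approximate bytes of a constant-bitrate stream such as an mp3 or AAC file."""
    bytes_per_second = bitrate_kbit_s * 1000 // 8   # kilobits per second -> bytes per second
    return bytes_per_second * seconds

if __name__ == "__main__":
    minute = 60
    cd = pcm_bytes(44_100, 16, 2, minute)
    print(f"CD-quality PCM (44 100 Hz, 16-bit, stereo): {cd / 1_000_000:.1f} MB per minute")
    for kbps in (128, 96):
        size = compressed_bytes(kbps, minute)
        print(f"{kbps} kbit/s compressed: {size / 1_000_000:.2f} MB per minute")
```

With these assumptions the PCM figure comes out at about 10.6 MB per minute, against roughly 0.96 MB for a 128 kbit/s file and 0.72 MB at 96 kbit/s.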

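Bandwidth is the difference between the highest and lowest frequencies carried in an audio stream. The sample rate used for playback or recording determines the maximum audio frequency that can be reproduced: a digital signal can only represent frequencies up to half its sample rate (the Nyquist frequency), so audio sampled at 44 100 Hz can contain frequencies up to 22 050 Hz. Because the lowest frequency of interest in audio work is about 20 Hz, the bottom of the range of human hearing, bandwidth is normally only about 20 Hz less than the highest recorded frequency. For practical purposes the two can therefore be treated as the same thing, and they are used interchangeably here. The term bandwidth may be applied to the frequency content of an audio signal stream, or to the frequency range that audio hardware or software can handle.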
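A sampled signal can only represent frequencies up to half its sample rate, the Nyquist frequency. The small Python sketch below illustrates this, assuming an ideal sampler with no anti-aliasing filter (real converters filter out content above the limit before sampling). Under that assumption, a tone above the Nyquist frequency is not lost but shows up at a lower, "aliased" frequency. The helper names are hypothetical.

```python
# Sketch: the Nyquist limit and aliasing for an ideal sampler with no anti-aliasing filter.

def nyquist_hz(sample_rate_hz):
    """Highest frequency that a given sample rate can represent."""
    return sample_rate_hz / 2

def alias_hz(tone_hz, sample_rate_hz):
    """Frequency at which a pure tone appears after sampling.

    Sampling cannot distinguish a frequency f from f + k * sample_rate, and
    anything above the Nyquist limit folds back below it.
    """
    f = tone_hz % sample_rate_hz
    if f > nyquist_hz(sample_rate_hz):
        f = sample_rate_hz - f
    return f

if __name__ == "__main__":
    rate = 44_100
    print(f"Nyquist frequency at {rate} Hz: {nyquist_hz(rate)} Hz")
    for tone in (1_000, 20_000, 30_000):
        print(f"{tone} Hz tone is heard as {alias_hz(tone, rate)} Hz")
```

A 30 000 Hz tone, for instance, comes out at 14 100 Hz, which is why recorders remove content above the Nyquist frequency before sampling rather than let it fold back into the audible range.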

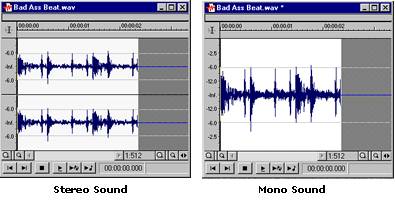

A waveform is an image that represents an audio signal or recording, showing how its amplitude changes over time. The amplitude of the signal is measured on the y-axis (vertically), while time is measured on the x-axis (horizontally).

Most audio recording programs show waveforms to give the user a visual idea of what has been recorded. If the waveform is very small and flat, the recording was probably very quiet. If the waveform almost fills the entire display, the recording levels may have been set too high, which risks clipping (distortion caused by the signal exceeding the maximum level that can be recorded). Changes in a waveform are also good indicators of when certain parts of a recording take place. For example, the waveform may be large when drums, guitars and keyboards are playing but become much smaller when only a vocalist is singing. This visual representation enables audio producers to locate parts of a song without even listening to the recording.
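The same judgement a producer makes by eye (a tiny waveform means a quiet recording, a waveform that fills the display may be clipped) can be made from the sample values. The Python sketch below assumes samples are floats where 1.0 is full scale, measures the peak in dB relative to full scale (dBFS), and flags samples at or near full scale as possible clipping. The function names and both thresholds are arbitrary examples, not a standard.

```python
import math

def peak_dbfs(samples):
    """Peak level of float samples (full scale = 1.0), in dB relative to full scale."""
    peak = max(abs(s) for s in samples)
    if peak == 0:
        return float("-inf")             # digital silence
    return 20 * math.log10(peak)

def level_report(samples, clip_threshold=0.999, quiet_dbfs=-30.0):
    """Rough verdict on a recording's level; the thresholds are arbitrary examples."""
    clipped = sum(1 for s in samples if abs(s) >= clip_threshold)
    if clipped:
        return f"possible clipping: {clipped} samples at or near full scale"
    peak = peak_dbfs(samples)
    if peak < quiet_dbfs:
        return f"very quiet: peak {peak:.1f} dBFS"
    return f"ok: peak {peak:.1f} dBFS"

if __name__ == "__main__":
    tone = [math.sin(2 * math.pi * 440 * n / 44_100) for n in range(44_100)]
    quiet = [0.01 * s for s in tone]                          # barely visible waveform
    too_hot = [max(-1.0, min(1.0, 1.5 * s)) for s in tone]    # gain too high, so peaks are flattened
    print(level_report(quiet))
    print(level_report(too_hot))
```

The quiet tone is reported as very quiet (its peak is around -40 dBFS), while the over-amplified one has its peaks flattened at full scale and is flagged as possible clipping.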

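A decibel (dB) is a unit used to express how loud something sounds.

In more detail, the decibel is a logarithmic scale used in acoustics (and other subjects) to express the magnitude of a quantity as a ratio to a reference level. For sound pressure level (SPL), the reference is 20 micropascals, roughly the quietest sound a person can hear. On this scale 20 decibels is quiet and 100 decibels is loud, and because the scale is logarithmic, each increase of 20 dB corresponds to ten times the sound pressure.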
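Sound pressure level in decibels is calculated as SPL = 20 log10(p / p0), where p is the sound pressure and p0 = 20 micropascals is the reference pressure for sound in air. The Python sketch below implements that formula and its inverse, assuming p is an RMS pressure in pascals; the function names are illustrative.

```python
import math

P_REF = 20e-6  # reference pressure for SPL in air: 20 micropascals

def spl_db(pressure_pa):
    """Sound pressure level in dB for an RMS sound pressure in pascals."""
    return 20 * math.log10(pressure_pa / P_REF)

def pressure_pa(level_db):
    """Inverse of spl_db: RMS pressure in pascals for a given SPL in dB."""
    return P_REF * 10 ** (level_db / 20)

if __name__ == "__main__":
    for level in (20, 80, 100):
        print(f"{level} dB SPL = {pressure_pa(level):.4f} Pa")
    print(f"1 Pa = {spl_db(1.0):.1f} dB SPL")
```

Running it shows the factor of ten per 20 dB: 80 dB corresponds to 0.2 Pa and 100 dB to 2 Pa, while a pressure of 1 Pa works out to about 94 dB SPL.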

So you could have, for example, a sound pressure level of 80 decibels at 1000 hertz, written 80 dB at 1 kHz. This describes a sound that makes your eardrum vibrate 1000 times per second (its frequency) with a sound pressure level of 80 dB (its magnitude). The two are independent: the same 1 kHz tone could just as well be played at 20 dB or at 100 dB.

A hertz (Hz) is one repetition (cycle) per second, named after Heinrich Hertz. So 1 kilohertz (kHz) = 1000 hertz (Hz).
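To connect hertz to actual audio samples, the sketch below generates one second of a 1 kHz sine tone at an assumed sample rate of 44 100 Hz and estimates its frequency by counting how many times the signal crosses zero going upward, that is, how many cycles occur per second. Zero-crossing counting is a simple illustrative technique chosen here, not a robust pitch detector; it only works well on clean, single-tone signals. The function names are hypothetical.

```python
import math

def sine_tone(freq_hz, sample_rate_hz, seconds):
    """Generate a pure sine tone as a list of float samples between -1.0 and 1.0."""
    count = int(sample_rate_hz * seconds)
    return [math.sin(2 * math.pi * freq_hz * n / sample_rate_hz) for n in range(count)]

def estimate_hz(samples, sample_rate_hz):
    """Estimate frequency by counting upward zero crossings (one per cycle)."""
    cycles = sum(1 for a, b in zip(samples, samples[1:]) if a < 0 <= b)
    duration_s = len(samples) / sample_rate_hz
    return cycles / duration_s

if __name__ == "__main__":
    rate, freq = 44_100, 1_000
    tone = sine_tone(freq, rate, 1.0)
    print(f"period: {1000 / freq} ms, samples per cycle: {rate / freq}")
    print(f"estimated frequency: {estimate_hz(tone, rate):.1f} Hz")
```

Each cycle of a 1 kHz tone lasts 1 ms, or 44.1 samples at this rate. The estimate lands within about 1 Hz of 1000 Hz, because the crossing at the very first sample has no earlier sample to compare against and is not counted.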

Pitch is a perceptual property that allows the ordering of sounds on a frequency-related scale. Pitches are compared as "higher" and "lower" in the sense associated with musical melodies, which require sound whose frequency is clear and stable enough to be heard as a definite note rather than as noise.
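Because pitch is perceptual there is no single formula for it, but Western music conventionally maps named notes onto frequencies. The Python sketch below assumes twelve-tone equal temperament with A4 tuned to 440 Hz, and uses MIDI note numbers (A4 = 69) as the note scale; the function names are illustrative. It shows that equal steps in pitch correspond to equal ratios of frequency rather than equal differences: every 12 notes (one octave) the frequency doubles.

```python
import math

A4_HZ = 440.0     # assumed tuning reference (concert pitch A4)
A4_MIDI = 69      # MIDI note number of A4

def note_to_hz(midi_note):
    """Frequency of a MIDI note number in twelve-tone equal temperament."""
    return A4_HZ * 2 ** ((midi_note - A4_MIDI) / 12)

def hz_to_note(freq_hz):
    """Nearest MIDI note number for a frequency (inverse of note_to_hz, rounded)."""
    return round(A4_MIDI + 12 * math.log2(freq_hz / A4_HZ))

if __name__ == "__main__":
    for note in (57, 69, 81):           # A3, A4, A5: one octave apart
        print(note, f"{note_to_hz(note):.1f} Hz")
    print("nearest note to 1000 Hz:", hz_to_note(1000))
```

The three A notes come out at 220, 440 and 880 Hz, each an octave (a doubling of frequency) above the last.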

STEREO, MONO AND SURROUND SOUND

Stereo and mono are two classifications of reproduced sound. Mono describes sound reproduced from a single channel, while stereo uses two or more channels to provide an experience much like being in the same room where the sound was created. Listening to stereo sound allows you to tell which direction each sound is coming from, which gives listeners a more natural experience than sound that comes from a single direction. Mono is still widely used today in situations where stereo would only take up extra bandwidth without offering any advantage, for example in voice communications such as talk radio.

The equipment needed to record stereo sound is also somewhat more expensive and complicated than equipment for mono. Recording mono sound requires only a single microphone, and the signal it captures is stored directly on magnetic tape or converted to a digital format for storage. Stereo requires multiple microphones, along with equipment to combine the separate channels into a single sound stream that a player can later split back into the original channels. This is why many simple hand-held voice recorders record only in mono: stereo is not really needed for the applications they are used for.
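In digital audio, combining channels into a single stream that a player can split apart again is usually done by interleaving samples: left, right, left, right, and so on. This is how uncompressed stereo PCM, such as the audio data in a .wav file, is normally laid out. The Python sketch below shows interleaving, de-interleaving, and a simple mono downmix made by averaging the two channels; the function names and sample values are hypothetical, and averaging is just one common downmix choice.

```python
def interleave(left, right):
    """Combine two channels into one stream: L0, R0, L1, R1, ..."""
    if len(left) != len(right):
        raise ValueError("channels must have the same number of samples")
    stream = []
    for l, r in zip(left, right):
        stream.extend((l, r))
    return stream

def deinterleave(stream):
    """Split an interleaved stereo stream back into left and right channels."""
    return stream[0::2], stream[1::2]

def downmix_to_mono(left, right):
    """Average the two channels into a single mono channel."""
    return [(l + r) / 2 for l, r in zip(left, right)]

if __name__ == "__main__":
    left = [100, 200, 300]      # hypothetical 16-bit sample values
    right = [-100, 0, 100]
    stream = interleave(left, right)
    print(stream)                                  # [100, -100, 200, 0, 300, 100]
    print(deinterleave(stream) == (left, right))   # True
    print(downmix_to_mono(left, right))            # [0.0, 100.0, 200.0]
```

The downmix also shows why mono loses direction: once the channels are averaged, there is no way to tell which side a sound originally came from.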

The most noticeable use of stereo sound is in music, where multiple sources of sound are present. If you have good hearing, you may be able to pick out the individual instruments a band uses and where each one is positioned. Stereo tries to replicate this with multiple channels, and it is something mono is completely unable to do. Surround sound is aptly named, because it surrounds the listener with an acoustic environment that goes beyond the two-channel stereo experience of yesteryear. Today's home theatre systems place the listener inside a virtual landscape of sound.

This mirrors real life, where we experience sound in the context of ambient noise coming from all around us. One of the most widely used surround sound formats is 5.1, which uses five main speakers (three at the front and two to the sides or rear of the listener) and one subwoofer (the ".1") for delivering bass sounds. Dolby Digital and DTS (Digital Theater Systems) are two common formats for delivering 5.1 sound.
